The next time you speak to an NHS healthcare professional it is quite likely that your conversation will be recorded before being transcribed and summarised for your medical record using AI provided by a private company. Other AI will be reading your hospital records and summarising your clinical letters. If you complain about your treatment, AI will probably be used to write a reply. It will be used to interpret your scans and blood results and plan your appointments. AI in the NHS is suddenly everywhere, all at once.

From £330m deals with Palantir to run the NHS Federated Data Platform to contracts between GPs and ambient scribe vendors, the scope is already too wide to clearly comprehend. Likewise, most clinicians like me who are using it have very little understanding about how it works, how much it costs, what risks it entails or who is profiting. Crucially, we have no idea whether it can improve quality, safety, equity, access or the experiences of staff or patients. We’re like kids with a new toy, too enthralled to ask questions.

The quintuple aim for improving healthcare includes: improved health outcomes, enhanced patient experience, reduced costs, professional wellbeing, and health equity. Health equity is defined as ‘the state in which everyone has the opportunity to attain their full health potential and no one is disadvantaged from achieving this potential because of social position or other socially determined circumstances’ (Nundy et al 2022).

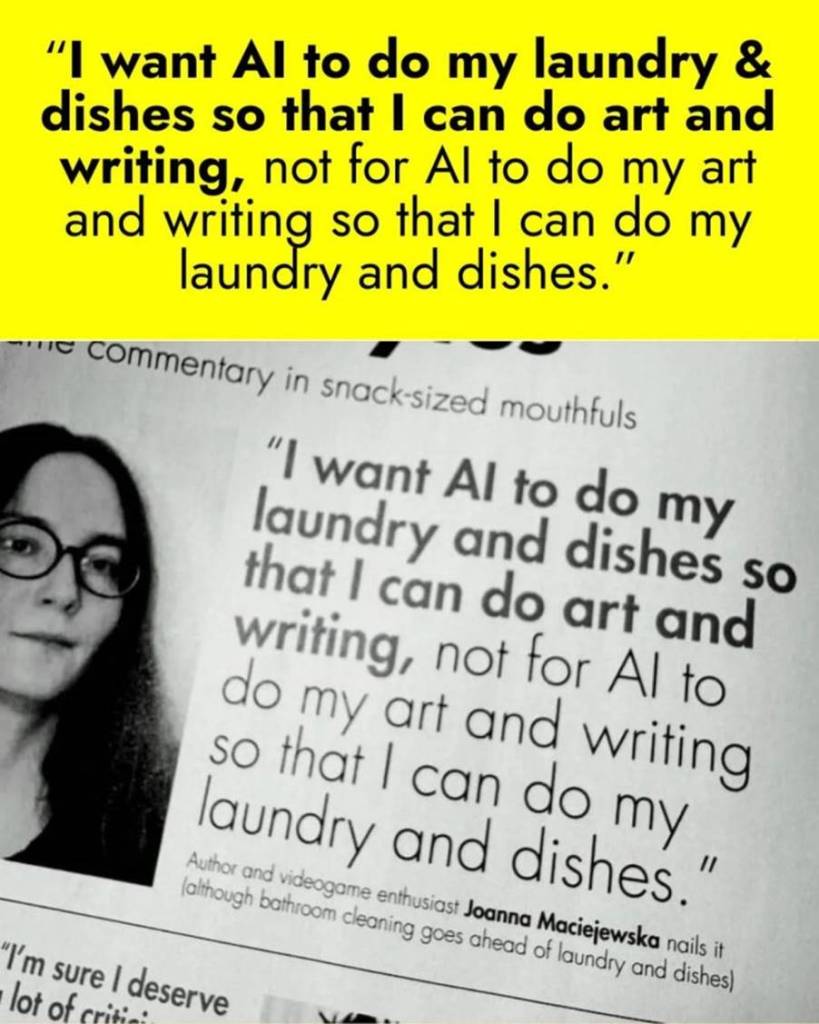

Like the laundry and the dishes, I want AI to take action on the social determinants of health, but all it wants to do is listen to my consultations. Perhaps the quintuple aim lacks profitability.

NHS England are clearly enthralled by AI (they are mostly of a certain age and health status after all) and more concerned with how to allay fears so that they can roll it out as far and as fast as possible [i]. While certain risks are acknowledged, including racial profiling, IT capacity, and mistakes (so-called ‘hallucinations’), there are few safeguards and they have nothing to say about the human, environmental, or political risks.

There was a surge in enthusiasm in September 2025 when Great Ormond Street Hospital published (‘announced’ is more accurate, since the actual trial has not been published) the results of a trial of ambient scribe technology, known as AST (Ambient Scribe Transcription) or AVT (Ambient Voice Transcription). The announcement said that in outpatient departments clinicians using AVT spent almost 25% more time with patients even though appointments were 8% shorter. In A&E settings 13.4% more patients were seen per shift. Such extraordinary results surely demand transparency over the details of the study and a cautious reception, but instead, ‘AVT will allow us to reimagine healthcare,’ says NHS England’s CCIO, Alec Price-Forbes. Price-Forbes told delegates at the HETT leadership summit that AI scribing software is key to the ‘digital by default’ model of care and that the NHS must ‘fundamentally reimagine how care is designed, delivered, and experienced’. He stressed that the problem isn’t whether AVT is technically feasible but how it will be deployed at scale.

I have recently started trialling AVT in my practice – I’m a GP in east London. The AI ‘listens’ to the consultation and then generates a summary of the transcription for the medical record. It is supposed to allow the GP to spend more time listening to the patient and less time looking at the computer screen while producing a more comprehensive and accurate record.

When I started working as a GP I would have 18 patients booked face to face in a half-day session. Some were complex and took longer than 10 minutes, some were straightforward and took less time, and some didn’t turn up. Overall, I would usually run late because of the added complexity of working in a deprived area, where almost every physical complaint is mixed up with and compounded by social problems, long-term mental illnesses and chronic pain, and where many patients didn’t speak English as a first language. In recent years we have moved to a system of total triage where every patient request is screened by a doctor before being given an appointment. Overall, it has been good for continuity for patients with long-term conditions and has improved quick access for patients with urgent issues. But it has meant that only complex patients are booked with me, and days when I am triaging can mean making decisions about over 200 patient requests in a single half-day session. Triage has made the work more intense and more complex.

Typing up consultation notes after each appointment is a precious moment to reflect on what was said, what was seen and what was felt, emotionally as well as in a physical examination. It is a judgement about what it all meant. When AVT generates a summary of the transcript of the consultation it is as if it is making a judgement about what to include and what to leave out. But AI can only give the illusion of judgement; it has no morality or empathy. It simply predicts what an average doctor would most likely want to include. What remains ought to have salience, which is to say that the words that are selected to represent the encounter stand for what was most important, at least to the clinician, and hopefully to the patient too. When I look back at consultations written by AVT I cannot work out what mattered. The salience is missing.

AI predicts that we will ask certain things like, ‘was your sputum blood-stained?’, ‘was there blood in your stool?’, or ‘did your chest pain radiate to your neck or left arm?’ and occasionally adds these details even if you never mentioned them. It’s like having your lawyer edit your consultations.

We must click a box to confirm that we have reviewed the AVT summary, and we can edit it to add our own judgement and humanity, which I do. So far it is entirely indifferent to coding, which means filing information under a problem title which links to a clinical code. To be fair, so are many doctors, but I am not, so that is something else I must edit. If you want AVT to save you time, which is surely the main point of it, then you need to take the path of least resistance. This must have been what the GOSH doctors did, especially given their version of AVT was several generations before mine.

AVT is particularly poor at predictions when patients are distressed, confused, skip from one topic to another or don’t speak English as a first language. Thanks to Total Triage, these are most of my consultations. I am not inclined to keep using it, but we have signed up to another trial from a different provider, so I will keep on investigating. I can see a time when it is mandated for medico-legal reasons, for cases where there is a dispute between a doctor and a patient about what was said, although for now at least the original transcript, which is held in UK data centres, is deleted after 30 days. There is an option where I can simply dictate my consultation notes, but using AI to do speech to text is like using a Tesla Cybertruck to drive around your neighbourhood. It’s ridiculously over-powered, over-spec’d, and over-priced for the task.

AI is a solution in search of a problem. We’ve got too much of it, so we’re trying to put it in everything. It has come along so fast, that we didn’t stop to diagnose the problems we really have and figure out ways to solve them. Before AI came along, I don’t remember ever thinking that typing consultation notes was the bugbear of my day. On the contrary, these tiny, reflective, creative acts are enjoyable. I am not alone in suspecting that AI wants the creative work that I enjoy, making my days even more intense and complex than before.

Energy consumption by AI is vast. A ChatGPT query requires on average ten times more electricity than a typical Google search. EOAI 275 Minutes of the UK AI Energy meeting June 2025 state that data centre electricity demand is projected to double by 2030 and nearly triple by 2035, becoming equivalent to the total electricity demand of Japan.

In the US, at the current rate of development, data centres are predicted to consume 8% of total electricity, compared with 3% at present. The December minutes of the UK AI Energy meeting state: ‘Senior officials underlined the urgency of clearing barriers to energy supply, the need for rapid and practical delivery, and the importance of acting within tight timescales given rising electricity demand.’

Globally, most of this electricity (outside of China) is going to be produced by fossil fuels. In addition to this existential threat of global warming, the consumption of fresh, potable water for cooling data centres is already having a significant effect on the availability of drinking water, especially in drought-prone areas, but also along the M4 corridor in the UK where home building is being cancelled to make way for UK data centres. At the time of writing the escalating conflict in Iran and surrounding countries has led to a surge in energy prices that may continue for years.

Given its environmental impact it is extraordinary that AI is being introduced so widely, without any assessment of whether the benefits are worth the costs, or whether any other technology might be more appropriate. To give a simple example, none of the problems that confront me every day as a GP are solved by AI: slow or unreliable IT; fragmented care; waiting times; poor communication between actual people in specialties and departments including hospitals, social care and the third sector; difficulty maintaining continuity; staff sickness and disputes; long waits for hospital appointments; chronic pain; mental illness and distress; poor housing and other effects of deprivation; long referral forms; messy problem lists; medical waste (excessive medical tests and interventions); and environmental waste (medications, energy, paper, etc.).

Another significant concern is the impact on staff, since we are being encouraged (strongly) to use AI for the admin work currently done by administrators and receptionists. The likely outcome is fewer jobs for healthcare administrators and receptionists, and because GPs will have to do more reading, we will likely be more isolated in our rooms in front of our computers.

AI cannot be separated from the handful of US companies that own and control it, or from the US government under Trump with whom they are closely aligned, an alignment which has a lot to do with the administration’s denial of climate change and gung-ho approach to energy production and the environment.

Palantir, co-founded by Peter Thiel and Alex Karp, has been given nearly £330m worth of NHS contracts and, according to a report in the Guardian on 5 Feb 2026, the UK Government has repeatedly blocked attempts by campaigners and MPs to find out details of contracts, including deals made with Boris Johnson and Keir Starmer. The Information Commissioner is investigating a refusal by the Department of Health and Social Care in June to release official reports about Palantir’s NHS federated data platform on the grounds that confidentiality is needed to allow the formation of government policy.

The US Foreign Intelligence Surveillance Act (FISA) gives law enforcement and intelligence agencies the right to access data anywhere in the world if it is held by a US company, and to date there have been no assurances that NHS data will be protected. In the US, Palantir are providing the software for 911 calls and data management for Medicare, and patient data has been used to direct ICE agents to areas where there are suspected immigrants, who have been aggressively targeted and even murdered.

The British Medical Association has had a sustained objection to the role of Palantir in the NHS and voted in its ARM in December 2025 to scrutinise and halt any further involvement of Palantir in handling patient data. NHS England’s medium term planning framework, published in October, said all trusts should be using FDP core products from April.

A company which is profiting from war, is closely associated with far-right ideologues, cannot guarantee NHS sovereignty of patient data, and whose contracts are hidden from public scrutiny is being given access to the world’s most comprehensive and valuable patient records. MPs, journalists, campaigners, and the BMA are being ignored and the majority of ICBs and NHS trusts are now using it. Concerns about transparency, mistrust, vendor lock-in, value for money and data security aren’t being taken seriously enough, according to Chi Onwurah, a Labour MP and chair of the science, innovation and technology select committee.

The majority of GPs are eagerly and uncritically adopting AI in spite of the BMA’s position. While a number of UK companies currently provide these services for GPs, the US tech giants have been ruthlessly assimilating smaller companies, so it may not be long before there are just a few providers, or even one. The human, environmental and political risks are enormous and I am very concerned.

Selected references

https://www.theguardian.com/politics/2026/feb/05/calls-to-halt-uk-palantir-contracts-grow-amid-lack-of-transparency-over-deals

https://youtu.be/YftdpU4ElUQ?si=twlKsRjOFdX1FvYf

https://www.thenerve.news/p/palantir-technologies-uk-government-contracts-size-nuclear-deterrent-atomic-peter-thiel-louis-mosley

https://www.thenerve.news/p/technofascism-us-america-fascism-trump-palantir-peter-thiel-uk-nigel-farage-reform

https://youtu.be/IY1kS0Htk6I?si=9hG7wRTGaA0hlFY0

Strategic litigation against public participation, aka Lawfare, ie, The law protects those it does not bind and binds those it does not protect.

Time saving = work harder http://gosh.nhs.uk/news/implementing-ai-scribing-into-outpatient-clinics-to-enhance-care-and-reduce-burnout/

[i] https://www.gov.uk/government/news/ai-to-be-trialled-at-unprecedented-scale-across-nhs-screening